Millisecond delays in market data access can erode trading returns and distort investment decisions. While many platforms claim real-time delivery, actual latency varies dramatically, from sub-10ms institutional feeds to multi-second consumer delays during volatility spikes. This guide walks you through acquiring, implementing, and maintaining reliable low-latency market data streams in 2026. You’ll learn which API architectures minimize gaps, how to handle trading halts without losing data integrity, and practical methods to verify that your pipeline delivers genuine real-time performance across equities, crypto, and derivatives markets.

Table of Contents

- Preparing For Real-Time Market Data Integration

- Building And Executing A Low-Latency Real-Time Data Pipeline

- Troubleshooting Common Real-Time Data Challenges

- Interpreting And Applying Real-Time Data For Trading And Investment Decisions

- Explore Real-Time Market Data Solutions At Handy.Markets

- Frequently Asked Questions

Key takeaways

| Point | Details |
|---|---|
| WebSocket push APIs deliver continuous data with minimal latency compared to polling methods | Push architecture maintains order book integrity and reduces gaps during high-volume periods |
| Trading halts and volatility spikes require event monitoring and adaptive throttling | Backfill queues and status channels help restore data continuity after market interruptions |
| Level 2 order book depth provides critical context beyond top-of-book quotes | Combining trades, quotes, and depth data creates robust pipelines for informed execution |
| Execution timing directly impacts alpha capture in short-term trading strategies | Delays of milliseconds can significantly reduce returns in high-frequency and algorithmic approaches |
| Different asset classes demand tailored data feeds and monitoring protocols | Equities use SIP feeds, crypto relies on SBE streams, options require Greeks and volatility surfaces |

Preparing for real-time market data integration

Before connecting to live market streams, you need the right infrastructure and a clear understanding of the available data channels. Real-time market data is delivered through low-latency APIs: WebSocket streaming for push updates and REST for point-in-time snapshots. Providers such as FMP, Alpaca, and Eulerpool offer sub-10ms latency on institutional-grade feeds. Consumer-grade platforms often introduce 3-5 second delays, particularly during volatility spikes, making them unsuitable for timing-sensitive strategies.

Your technical requirements start with reliable internet connectivity capable of sustaining persistent WebSocket connections without packet loss. You’ll need software clients or libraries that handle WebSocket protocols natively, API credentials from your chosen data provider, and sufficient processing capacity to handle message rates that can exceed thousands per second during market opens or news events. Understanding the distinction between snapshot data (point-in-time via REST) and streaming data (continuous via WebSocket) helps you choose the right approach for your use case.

Data channels vary by what information they deliver. Trade channels report executed transactions with price, volume, and timestamp. Quote channels stream bid/ask spreads as they update. Order book channels (Level 2 depth) show multiple price levels beyond the best bid/offer, revealing supply and demand dynamics that inform execution decisions. Asset classes also differ in how data is delivered: US equities arrive through Securities Information Processor (SIP) feeds, crypto through SBE streams, and options feeds add Greeks and volatility surfaces on top of price quotes. When tracking financial markets across multiple asset types, you’ll often need separate connections optimized for each class.

Essential data channels by priority:

- Trades: Actual execution prices and volumes forming the official price history

- Quotes: Best bid/offer spreads updating in real time as market participants adjust orders

- Order book depth: Multiple price levels showing where large buy/sell interest concentrates

- Event status: Trading halt notifications, auction periods, and market state changes

Pro Tip: Start with a single asset class and master its data nuances before expanding. Equities and crypto have fundamentally different market microstructures that affect how you interpret streaming data.

| Data Type | Latency Target | Update Frequency | Best Use Case |
|---|---|---|---|
| WebSocket trades | 5-15ms | Every execution | Real-time price discovery and volume analysis |
| WebSocket quotes | 5-15ms | Every order book change | Spread monitoring and execution timing |
| REST snapshots | 100-500ms | On-demand polling | Periodic checks and system initialization |
| Level 2 depth | 10-25ms | Every depth change | Order flow analysis and large order detection |

Building and executing a low-latency real-time data pipeline

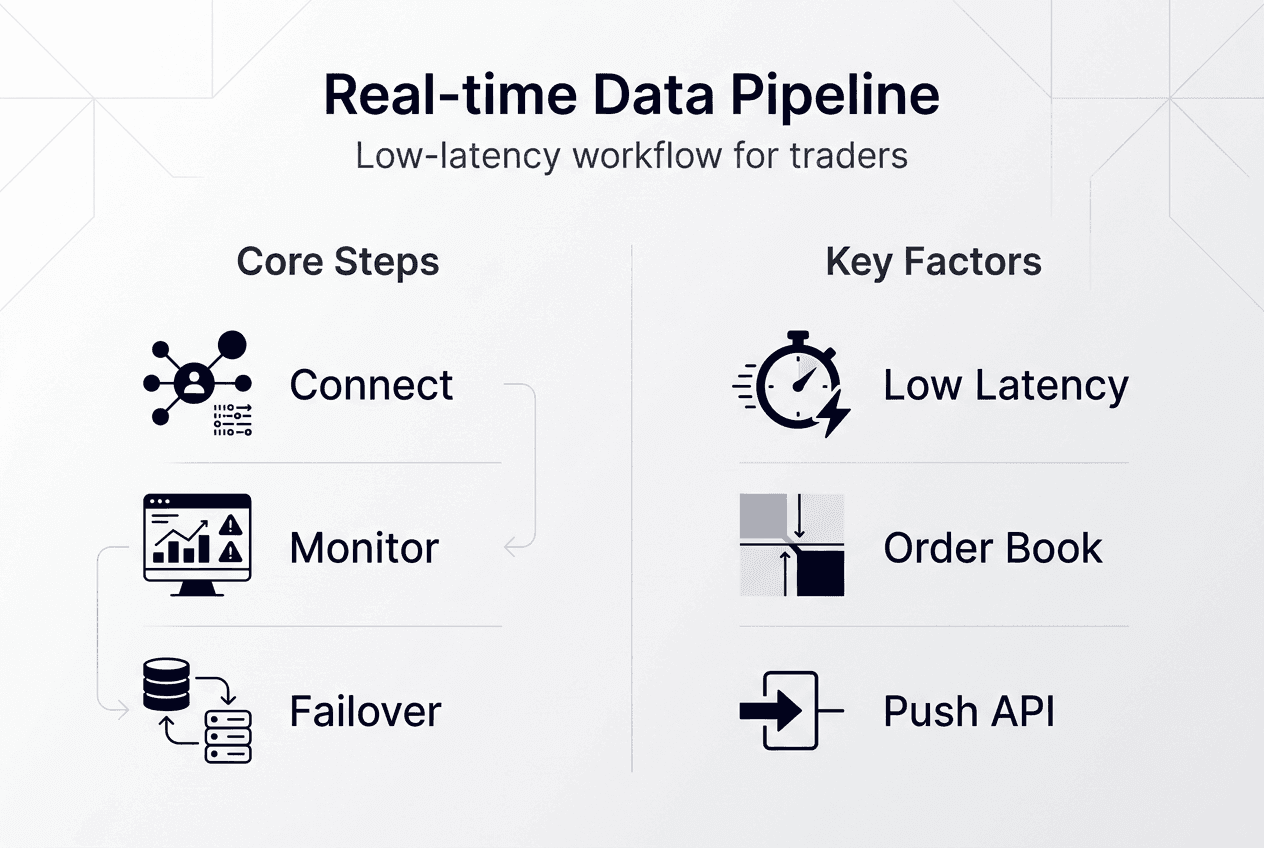

WebSocket push architecture outperforms polling by maintaining persistent connections that deliver data immediately as market events occur. Polling introduces artificial delays between your requests and market changes, creating gaps where price movements go unobserved. Push APIs eliminate this lag by streaming every trade, quote update, and order book change the moment exchanges publish them.

Your pipeline implementation follows this sequence. First, establish a WebSocket connection to your data provider’s streaming endpoint using proper authentication. Second, subscribe to specific channels for your target instruments (trades, quotes, depth). Third, verify data consistency by comparing message timestamps against your system clock to measure actual latency. Fourth, monitor order book state against event channels to catch discrepancies during halts or technical issues.

Beyond choosing push APIs over polling, monitor order book depth and event states during halts. Volatility spikes widen spreads and raise message rates, so throttling is needed to prevent downstream system overload. Trading halts create data gaps that can corrupt your order book state if not handled properly. Event status channels broadcast halt notifications and resumption signals. Implement backfill queues that request missing data once trading resumes, ensuring your book reflects accurate post-halt state before executing any orders.
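
The halt handling described above can be sketched roughly as follows. This is a minimal illustration, not any provider’s API: the `"halt"` and `"resume"` status strings, the snapshot shape, and the `fetch_snapshot` callable are all assumptions standing in for whatever your feed actually exposes.

```python
from typing import Callable, Dict, List, Tuple

# Assumed snapshot shape: {"bids": {price: size}, "asks": {price: size}}
Snapshot = Dict[str, Dict[float, float]]


class HaltAwareBook:
    """Order book that freezes during a halt and resyncs from a snapshot on resume."""

    def __init__(self, fetch_snapshot: Callable[[], Snapshot]) -> None:
        self.fetch_snapshot = fetch_snapshot  # hypothetical REST snapshot call
        self.bids: Dict[float, float] = {}
        self.asks: Dict[float, float] = {}
        self.halted = False
        # Depth updates seen while halted are kept only for post-analysis logging.
        self.skipped: List[Tuple[str, float, float]] = []

    def on_status(self, status: str) -> None:
        if status == "halt":
            self.halted = True
        elif status == "resume":
            # Replace the whole book with a fresh snapshot before trading again.
            snapshot = self.fetch_snapshot()
            self.bids = dict(snapshot["bids"])
            self.asks = dict(snapshot["asks"])
            self.skipped.clear()
            self.halted = False

    def on_depth(self, side: str, price: float, size: float) -> None:
        if self.halted:
            self.skipped.append((side, price, size))
            return
        levels = self.bids if side == "bid" else self.asks
        if size == 0:
            levels.pop(price, None)  # size 0 is assumed to mean "level removed"
        else:
            levels[price] = size

    def can_trade(self) -> bool:
        return not self.halted
```

Order logic would check `can_trade()` before acting, so nothing executes against a pre-halt book.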

Volatility spikes generate message rates that can overwhelm processing capacity. Adaptive throttling selectively samples high-frequency updates during extreme conditions while preserving critical events like large trades or significant spread changes. This prevents your pipeline from falling behind real-time during the exact moments when timely data matters most. When monitoring live stock quotes and real-time crypto prices, implement separate throttling thresholds since crypto markets often sustain higher baseline message rates.
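
One possible shape for adaptive throttling is sketched below. The thresholds (`max_rate`, `sample_every`, `large_trade_size`) are illustrative assumptions rather than recommended values, and this version treats only large trades as critical; a fuller version would also pass significant spread changes.

```python
from dataclasses import dataclass


@dataclass
class Throttle:
    """Samples routine messages once a per-second limit is hit; large trades always pass."""

    max_rate: int = 1000                # messages per second before sampling starts (assumed)
    sample_every: int = 10              # keep 1 in N messages once over the limit (assumed)
    large_trade_size: float = 10_000.0  # size treated as a critical trade (assumed)

    def __post_init__(self) -> None:
        self._window_start = 0.0
        self._count = 0

    def should_process(self, kind: str, size: float, now: float) -> bool:
        # Start a new one-second counting window when the old one has expired.
        if now - self._window_start >= 1.0:
            self._window_start = now
            self._count = 0
        self._count += 1
        if kind == "trade" and size >= self.large_trade_size:
            return True   # critical events bypass throttling
        if self._count <= self.max_rate:
            return True   # under the limit: process everything
        # Over the limit: keep only every Nth message.
        return (self._count - self.max_rate) % self.sample_every == 0
```

Separate `Throttle` instances with different `max_rate` values would cover the case of distinct thresholds for equities and crypto.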

Pipeline implementation steps:

- Initialize WebSocket connection with authentication credentials and heartbeat monitoring

- Subscribe to required channels (trades, quotes, depth) for target instruments

- Validate message timestamps against system time to measure end-to-end latency

- Implement reconnection logic with exponential backoff for connection failures

- Monitor event channels for halt notifications and trigger backfill procedures

- Log all state transitions and data gaps for post-analysis and quality verification
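
Two of the steps above, reconnection with exponential backoff and timestamp-based latency measurement, can be sketched independently of any WebSocket library. The function names and default values here are illustrative assumptions. Note that the latency figure is only meaningful if your system clock is synchronized (for example via NTP or PTP) with the clock that stamps the messages.

```python
import random
from typing import Iterator, Optional


def backoff_delays(base: float = 0.5, cap: float = 30.0, jitter: float = 0.1,
                   rng: Optional[random.Random] = None) -> Iterator[float]:
    """Yield reconnect delays in seconds: base, 2*base, 4*base, ... up to cap.

    Each delay is scaled by a random factor in [1 - jitter, 1 + jitter] so that
    many clients do not reconnect at the same instant.
    """
    rng = rng or random.Random()
    delay = base
    while True:
        yield delay * (1 + rng.uniform(-jitter, jitter))
        delay = min(cap, delay * 2)


def latency_ms(exchange_ts_ns: int, receive_ts_ns: int) -> float:
    """End-to-end latency in milliseconds from two nanosecond timestamps."""
    return (receive_ts_ns - exchange_ts_ns) / 1_000_000
```

A reconnect loop would call `next()` on the generator after each failed attempt, sleep for that long, and create a fresh generator once a connection succeeds. On the receive side, `time.time_ns()` is one way to obtain `receive_ts_ns`.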

Pro Tip: Implement dual connections to primary and backup data providers. Automatic failover ensures continuity if one feed experiences technical issues or elevated latency.

| Approach | Latency | Data Gaps | Implementation Complexity | Cost Efficiency |
|---|---|---|---|---|
| WebSocket push | 5-15ms | Minimal with proper halt handling | Medium (persistent connection management) | High (continuous data per connection) |
| REST polling | 100ms-5s | Significant (misses events between polls) | Low (simple HTTP requests) | Low (pay per request) |
| Hybrid (push + snapshot) | 5-15ms core, 500ms verification | Minimal (snapshots validate state) | High (coordinate two systems) | Medium (optimizes both approaches) |

Execution timing is critical: any delay between a signal and its execution, such as the gap between a close-based signal and the next open, erodes alpha in strategies that rely on precise entry and exit points. Your pipeline must deliver data fast enough that your decision logic and order routing complete before market conditions change. For high-frequency approaches, every millisecond counts. For swing trading, seconds matter less, but consistent delivery without gaps remains essential for accurate signal generation.

Troubleshooting common real-time data challenges

In practice, ‘real-time’ means a delay of a few milliseconds, and consumer feeds can lag by seconds during volatility. Institutional feeds typically deliver data 5-15ms after the exchange event. Consumer platforms like Yahoo Finance introduce 3-5 second delays during normal conditions, expanding to 10+ seconds when markets move rapidly. This makes consumer feeds unsuitable for any strategy where execution timing affects profitability.

Common pipeline issues include brief data gaps during trading halts, state misalignment between API message types, and latency spikes during market opens or major news releases. Trading halts interrupt the normal flow of trades and quotes. Without proper event monitoring, your order book can become stale, showing pre-halt prices while the market has moved significantly. Event status channels broadcast halt start and end notifications. Your pipeline should pause order book updates during halts and request snapshot data once trading resumes to resynchronize state.

Message ordering problems occur when trades and quotes arrive out of sequence due to network variability. Each message includes an exchange timestamp and a sequence number. Your processing logic should buffer messages briefly and reorder by sequence number before updating internal state. This prevents temporary inconsistencies where a quote update reflects a price level that doesn’t align with the most recent trade.
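
A small reorder buffer illustrates the idea. This sketch assumes sequence numbers increase by exactly one per message and bounds the buffer by message count (`max_held`) rather than by time; both are simplifying assumptions, and a production version would likely add a timeout as well.

```python
from typing import Any, Dict, List


class ReorderBuffer:
    """Releases messages in sequence order, holding early arrivals until gaps fill."""

    def __init__(self, first_seq: int = 1, max_held: int = 100) -> None:
        self.next_seq = first_seq
        self.max_held = max_held          # assumed bound on buffered messages
        self._held: Dict[int, Any] = {}

    def push(self, seq: int, msg: Any) -> List[Any]:
        """Add one message; return every message that is now deliverable in order."""
        if seq < self.next_seq or seq in self._held:
            return []                     # stale or duplicate message: ignore it
        self._held[seq] = msg
        if len(self._held) > self.max_held:
            # The gap has not filled in time: skip ahead to the oldest held
            # message. The skipped range should be logged and backfilled.
            self.next_seq = min(self._held)
        released = []
        while self.next_seq in self._held:
            released.append(self._held.pop(self.next_seq))
            self.next_seq += 1
        return released
```

Internal state is updated only from the list `push` returns, so a quote is never applied ahead of a trade that precedes it in sequence.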

WARNING: No market data feed delivers zero latency. Physics and network infrastructure impose minimum delays. Design your strategies accounting for 5-15ms institutional latency or 1-5 second consumer latency. Assuming instant data leads to systematic execution errors and unexpected slippage.

Verifying data quality requires continuous monitoring of several metrics. Track message arrival timestamps against exchange timestamps to measure actual latency. Monitor message sequence numbers for gaps indicating lost packets or connection issues. Compare your order book state against periodic REST snapshots to detect drift. Log all anomalies with enough context to diagnose root causes during post-analysis. When monitoring market data latency, establish baseline performance metrics during normal conditions so you can quickly identify degradation.
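
As a rough sketch of baselining, latency samples collected during normal conditions can be summarized as percentiles and later compared against live readings. The choice of percentiles and the `factor` of 2 are assumptions for illustration, not thresholds from any standard.

```python
import statistics
from typing import Dict, Sequence


def latency_baseline(samples_ms: Sequence[float]) -> Dict[str, float]:
    """Summarize latency samples (in ms) as 50th, 95th and 99th percentiles."""
    # quantiles(n=100) returns the 99 cut points between percentiles 1..99.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}


def is_degraded(current_p99_ms: float, baseline_p99_ms: float,
                factor: float = 2.0) -> bool:
    """Flag degradation when the live p99 exceeds the baseline p99 by `factor`."""
    return current_p99_ms > baseline_p99_ms * factor
```

Tail percentiles matter more than the mean here, because brief latency spikes at market opens are exactly what an average hides.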

Data quality verification checklist:

- Timestamp comparison: Exchange time vs. arrival time should stay within expected latency range

- Sequence gap detection: Missing sequence numbers indicate lost messages requiring backfill

- Order book sanity checks: Best bid should never exceed best ask; spreads should stay reasonable

- Volume consistency: Cumulative trade volume should match exchange-reported totals

- Heartbeat monitoring: Periodic ping/pong messages confirm connection health
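
Two items from the checklist above, sequence gap detection and order book sanity checks, might look like this. The function names and the 1% default spread limit are illustrative assumptions; what counts as a "reasonable" spread depends heavily on the instrument.

```python
from typing import Iterable, List, Tuple


def find_sequence_gaps(seqs: Iterable[int]) -> List[Tuple[int, int]]:
    """Return inclusive (start, end) ranges of missing sequence numbers.

    Assumes the input is already in arrival order after any reordering step.
    """
    gaps: List[Tuple[int, int]] = []
    prev = None
    for seq in seqs:
        if prev is not None and seq > prev + 1:
            gaps.append((prev + 1, seq - 1))
        if prev is None or seq > prev:
            prev = seq
    return gaps


def book_is_sane(best_bid: float, best_ask: float,
                 max_spread_pct: float = 1.0) -> bool:
    """Reject crossed books, non-positive prices and implausibly wide spreads."""
    if best_bid <= 0 or best_ask <= 0 or best_bid > best_ask:
        return False
    mid = (best_bid + best_ask) / 2
    spread_pct = (best_ask - best_bid) / mid * 100
    return spread_pct <= max_spread_pct
```

Each gap range returned by `find_sequence_gaps` is a candidate for a backfill request, and a failed `book_is_sane` check is a reasonable trigger for a snapshot resync.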

Interpreting and applying real-time data for trading and investment decisions

Millisecond latency differences create measurable alpha advantages in short-term trading strategies. Execution delay is the primary alpha killer: it erodes returns because market conditions change continuously. A 10ms advantage in observing a price move and executing a response can mean capturing a favorable price before it adjusts. High-frequency strategies depend entirely on this speed edge, while even swing traders benefit from executing at intended prices rather than slipping to worse levels.

Integrating real-time data into trading systems requires feeding streaming quotes, trades, and order book changes into your decision logic. Automated systems subscribe to relevant channels and trigger buy/sell signals based on predefined conditions like spread compression, volume surges, or price breakouts. Manual traders use real-time dashboards showing live order books and trade flows to inform discretionary decisions. Both approaches demand data delivery fast enough that your actions remain relevant to current market conditions.
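
As one example of a predefined condition, a volume surge can be detected by comparing each new trade or bar volume against a rolling average. This is a deliberately simple sketch; the window length and the `multiple` threshold are assumptions, and it is not a trading recommendation.

```python
from collections import deque
from typing import Deque


class VolumeSurgeDetector:
    """Flags a volume that exceeds a multiple of the rolling average volume."""

    def __init__(self, window: int = 20, multiple: float = 3.0) -> None:
        self.window = window
        self.multiple = multiple
        self.volumes: Deque[float] = deque(maxlen=window)

    def update(self, volume: float) -> bool:
        """Record one volume reading; return True if it counts as a surge."""
        surge = False
        # Only signal once the window is full, so early readings cannot trigger.
        if len(self.volumes) == self.window:
            average = sum(self.volumes) / self.window
            surge = average > 0 and volume > self.multiple * average
        self.volumes.append(volume)
        return surge
```

The new reading is compared against the average of the previous window before being added to it, so a surge does not dilute its own threshold.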

Current AI and large language models struggle with live streaming data evaluation. These systems excel at analyzing historical patterns but lack the architecture to process continuous real-time feeds and generate immediate trading signals. Traditional algorithmic approaches using rule-based logic or statistical models remain more effective for real-time decision making. AI can assist with pattern recognition in historical data to inform strategy design, but execution relies on conventional systems optimized for low-latency processing.

Avoiding overreliance on delayed consumer feeds during execution prevents systematic slippage. Use consumer platforms for research and analysis where exact timing doesn’t matter, but switch to institutional-grade real-time feeds when placing orders. The cost difference often pays for itself through improved execution quality. Setting up ETF real-time price alerts helps you monitor multiple instruments simultaneously without manually checking each position.

Optimal real-time data applications:

- Market making: Continuously update bid/ask quotes based on order book depth and recent trades

- Momentum trading: Detect price breakouts and volume surges within seconds of occurrence

- Arbitrage: Identify price discrepancies across venues before they close

- Risk management: Monitor position exposure and market volatility in real time to adjust hedges

- Execution optimization: Time order placement to minimize market impact and slippage

Pro Tip: Backtest your strategies using historical tick data with realistic latency assumptions. Simulating instant execution with perfect data creates unrealistic performance expectations that live trading won’t match.
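
A minimal way to add a latency assumption to a backtest is to fill each order at the first tick that occurs at or after the signal time plus the assumed latency, rather than at the tick that generated the signal. The function below is a sketch under that assumption; it ignores queue position, partial fills, and market impact.

```python
from bisect import bisect_left
from typing import Optional, Sequence


def fill_price(tick_times_ms: Sequence[float], tick_prices: Sequence[float],
               signal_time_ms: float, latency_ms: float) -> Optional[float]:
    """Price of the first tick at or after signal time + latency, or None.

    tick_times_ms must be sorted ascending and aligned with tick_prices.
    """
    index = bisect_left(tick_times_ms, signal_time_ms + latency_ms)
    return tick_prices[index] if index < len(tick_prices) else None
```

Running the same strategy with `latency_ms` set to 0, then to your measured pipeline latency, shows how much of the backtested return depends on unrealistically fast execution.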

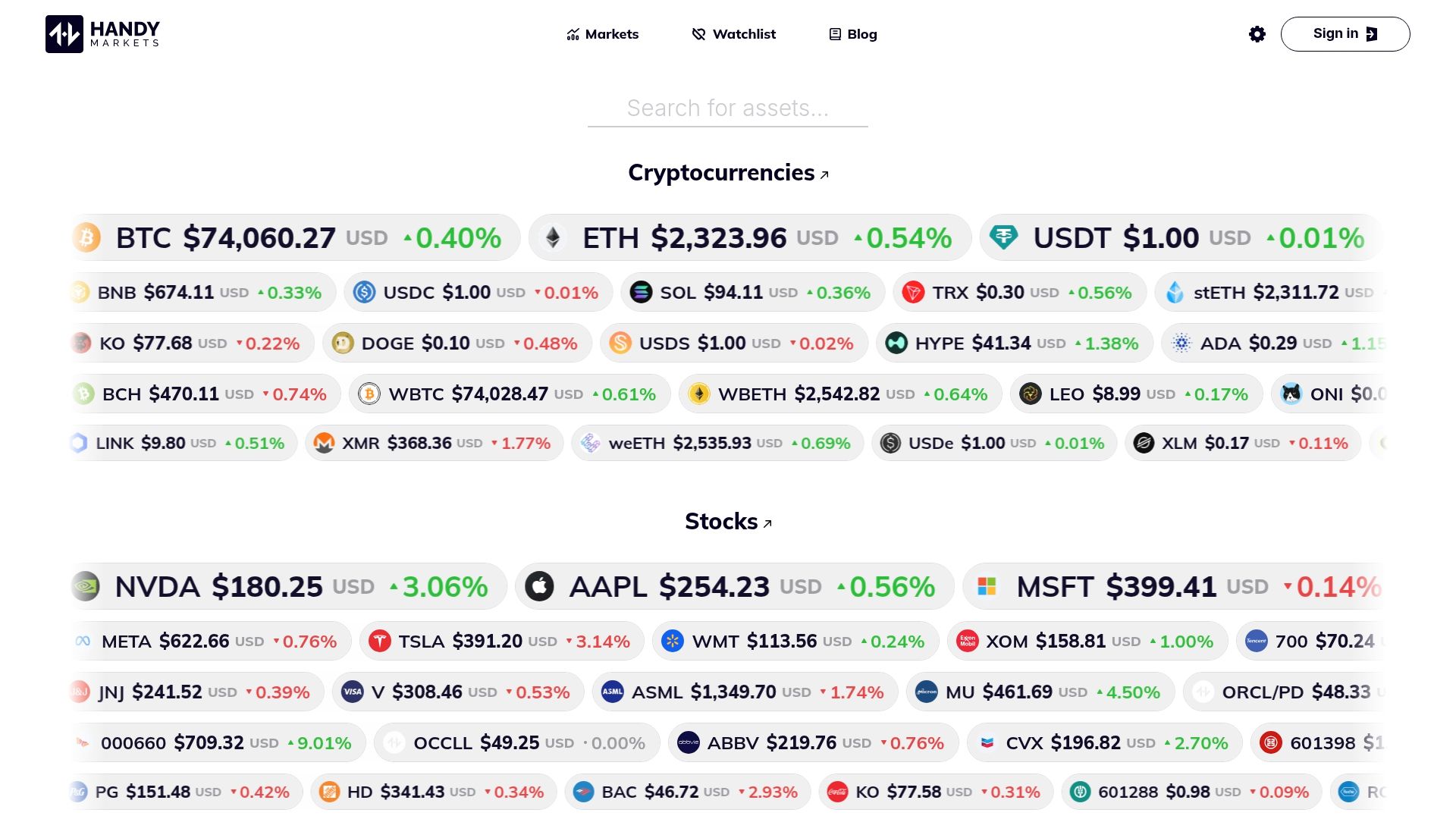

Explore real-time market data solutions at Handy.Markets

After understanding how to build and maintain low-latency data pipelines, you need a platform that delivers comprehensive real-time coverage across asset classes. Handy.Markets provides live pricing for financial markets including crypto, stocks, forex, and ETFs with minimal latency. The platform aggregates data from multiple sources, giving you a unified view of market movements without managing separate feeds for each asset type.

Customizable alerts let you monitor specific price levels, percentage changes, or volume thresholds across your watchlist. Notifications deliver via Telegram, Discord, Slack, SMS, webhook, or email, ensuring you catch critical movements regardless of where you’re working. Live stock quotes and charts update continuously, while real-time cryptocurrency data covers major exchanges and trading pairs. This multi-asset approach aligns with the professional monitoring needs described throughout this guide, providing the real-time visibility essential for informed trading decisions.

FAQ

What is the difference between real-time and delayed market data?

Real-time data arrives within milliseconds of exchange events, typically 5-15ms for institutional feeds. Delayed data can lag seconds to minutes, with consumer platforms often showing 15-minute delayed quotes or 3-5 second delays during volatility. Real-time feeds require WebSocket streaming connections and low-latency infrastructure, while delayed data uses simpler polling or periodic updates. For long-term investing where exact entry timing matters less, delayed data suffices. High-frequency and short-term strategies require genuine real-time delivery to capture intended prices and avoid systematic slippage.

How do trading halts affect real-time data feeds?

Trading halts create data gaps and potential state misalignment in your order book. Trades and quotes stop flowing during the halt period, but your system must recognize this pause rather than assuming stale data remains valid. Event status channels broadcast halt notifications and resumption signals. Implementing backfill queues that request snapshot data once trading resumes restores accurate order book state. Without proper halt handling, your pipeline may execute orders based on pre-halt prices that no longer reflect current market conditions.

Which asset classes need specialized real-time data considerations?

Equities typically stream via Securities Information Processor (SIP) feeds in the US, which consolidate data from multiple exchanges. In crypto, some exchanges offer Simple Binary Encoding (SBE) streams, and baseline message rates tend to be higher because markets trade 24/7. Options require additional data such as implied volatility, Greeks (delta, gamma, theta, vega), and volatility surfaces beyond simple price quotes. Each asset class demands tailored monitoring for halts, volatility patterns, and data channel priorities. Forex operates continuously across global sessions, requiring timezone-aware processing and an understanding of liquidity patterns that vary by trading hours.
